News and Lies 2:

On post-truth

“What happens when anyone can make it appear as if anything has happened, regardless of whether or not it did?” technologist Aviv Ovadya warns. — Charlie Warzel

A recent article by Charlie Warzel summarizing the perspective of Aviv Ovadya has become quite popular. He admits to scare-mongering, but justifies this by claiming that the situation is bad enough that we all really should be scared. Certainly, some of the details are accurate, but — as is par for the course for a popular take on a newly-relevant subject with a long history — there’s a great deal of context missing.

Let’s first add a little historical context to defuse a bit of the appeal-to-authority that propels this article. Ovadya is described as having “predicted the 2016 fake news crisis,” on the grounds that he made a presentation about it in October of 2016. This is a very low bar: the political ramifications of propaganda circulated within social-media-amplified cultural bubbles were a hot topic throughout 2016, the same way they were at the tail end of Obama’s first term, when the publication of The Filter Bubble coincided with concerns about right-wing conspiracy theories. The Filter Bubble didn’t invent these concerns, either — that book was a (perhaps independent) rehash of concerns about internet news expressed on various mailing lists as early as 1992.

The term of art back then for what we now call ‘filter bubbles’ was ‘the daily me’ — as in, a hypothetical newspaper that, based on personality profiling, shows the user only the news stories they want to see. We can probably trace the idea back even further; however, I first became aware of the ‘daily me’ concept back in 2008, in a lecture at the Computers, Freedom, and Privacy conference — and I was one of the few attending who wasn’t already familiar with the concept, meaning that in the community at the intersection of tech and social justice, the political ramifications of fake news on social media were old news ten years ago. Ovadya’s epithet could be applied to anyone who was reading political coverage in mainstream news outlets in 2016 just as well as it could be applied to him, so his authority is not, by that metric, meaningful.

In the absence of the authority of someone who “predicted the fake news crisis”, we can critically re-examine the claims being made.

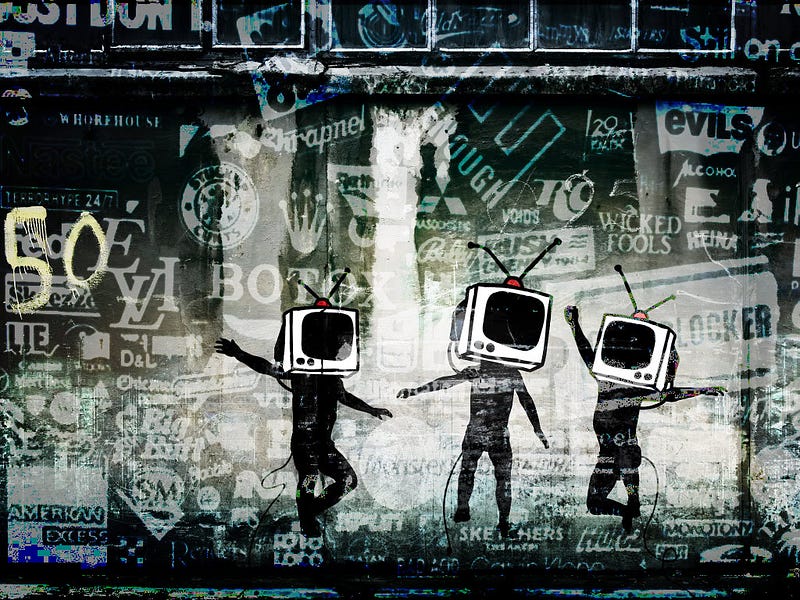

The narrative Ovadya & Warzel paint is one where a fixed, stable, universally accepted common ontology is being eroded by tech that manipulates flows of information while simultaneously making forgery easier. This perspective is shortsighted — it doesn’t match history, and depends upon some dubious assumptions about the homogeneity of culture.

Humans don’t live in reality, and we never have. We live in networks of personal mythology, occasionally shaped and guided by reality’s physical limits, on the rare occasions when those physical limits interfere with our ability to maintain false beliefs in ways that are not easily ignored. Our personal mythologies are a bricolage of (potentially internally conflicting) heuristics and factoids, collected from all the people and media we interact with.

For a few hundred years, due to the standardization of educational systems around canons of works deemed important, large groups of powerful people (the rich, the intelligentsia, royalty, etc.) had substantially similar personal mythologies. A literate westerner in the late eighteenth century could be reasonably expected to be deeply familiar with the Bible, classical mythology, the works of Plato and Aristotle, and a handful of other works, along with being able to read and write in Latin (and probably French), regardless of their homeland. A literate westerner a few hundred years earlier would have probably been a monk who had studied the Trivium and the Quadrivium. From what I understand, similar highly standardized educational systems existed in China, established far earlier, and this system was geared toward state bureaucracy rather than religious institutions — however, I am not familiar enough with this system to describe it in detail.

The most important aspect of this situation is that it was not extended to most people — the poor and illiterate had their own private oral mythologies, influenced but not fully controlled by religious and state institutions. Improved printing technologies — cheap enough to use for mass entertainment and education but expensive enough to require a surrounding institution — universal mandatory education, and various attempts at education standardization exposed a greater number of people to particular memeplexes favored by particular groups of powerful people.

It’s important to note that, in the United States, this process was part of a then-politically-radical democratization made possible by access to low-cost printing technologies by dangerous terrorist subversives like Benjamin Franklin. When the Revolutionary War was won, printers switched gears from propaganda pamphlets and broadsheets to general material for the education of a population who needed to be brought up to the minimum standards people like Thomas Jefferson thought were necessary to keep a democratic system from falling into tyranny. While today we generally see this process as a good idea, we should recognize that in the eighteenth century in Europe and the Americas, popular vote was seen as two steps short of anarchy and mass education was not seen as a universal good: from an outside perspective, we’re talking about dangerous political radicals determining the canon of an education system.

Of course, books were still quite expensive until the 20th century, with the introduction of paperback ‘pocket books’. The 20th century also corresponded to the development of film, radio, and television — popular formats that, like book and newspaper printing, depended upon expensive technology and institutions, and therefore were broadcast. This is the first point at which we can say that people’s personal mythologies began to mostly converge: the point at which a handful of national TV channels, a handful of nationally-syndicated radio networks, a handful of large movie studios with strict control over theatre chains, a handful of big newspaper companies and book publishers, a standardized education system, and a very active censorship bureau controlled much of media. This period could be bookended on one side by the beginning of the Great Depression (when movie theatres became cheap mass entertainment) and on the other by the late 1960s, when new limitations on post office censorship and widespread access to Xerox and Mimeograph machines made a mass non-broadcast culture feasible — what we call, variously, faxlore or zine culture.

The development of online communities starting in the 80s can be seen as an extension of this anti-broadcast trend that I trace to the late 60s. There’s a big overlap between early online culture and faxlore, ham, and CB radio culture, after all. This development has never been apolitical: as soon as scalable alternatives to broadcast culture appeared, people began to take advantage of them to create and distribute their own personal mythologies, and these mythologies have often had a political element — as with the development of Discordianism starting in 1958, the radical political zines and newsletters on the left, right, and radically unseen sides through the 60s, punk zines in the 70s and 80s, and the faxlore origins of the proto-alt-right in the early 90s with anti-Clinton xeroxed ‘factsheets’. These strains made the jump first to Usenet and BBSes, then later to the web.

All of this is to say that, rather than a sudden assault on the edifice of consensus truth, we are looking at the tail end of a sixty-year return to equilibrium — the conclusion of an anomalous century of relatively-homogeneous consensus reality.

Usenet and the web did something that BBSes (outside of store-and-forward networks like FidoNet) and zines largely could not — they deterritorialized or delocalized exposure to alternate realities. People like John Perry Barlow and McLuhanist media theorists put this in utopian terms, and the culture jamming movement put it in functional, operative terms. After all, the broadcast reality is often wrong, and sometimes intentionally so: fraud, being expensive, was the domain of the powerful, and the democratization of the means of fraud (or, if you prefer, the democratization of disinformation construction and distribution mechanisms) was seen as a net positive. Culture jammers hoped that the good lies and the bad lies would cancel each other out in an open forum.

When we talk about filter bubbles, the problem is not that such alternate realities exist. Instead, geographically-dispersed clusters of alternative cultures remain isolated from each other as a side effect of ranking algorithms. These cultures, which until the 80s corresponded to regions, now can cross state boundaries in difficult-to-trace ways. Because representative government is based on geography rather than psychography, this disrupts attempts to consolidate political power: it’s very difficult to gerrymander around a primarily internet-centric culture in such a way that a guaranteed win is possible.

Filter bubbles produce very real problems. The human capacity to ignore physical reality is impressive — only rarely does even mortal danger shake us, or else no veteran of active combat duty would consider themselves religious or patriotic, except perhaps in fairly warped ways, such as belief in a sadistic or blind-idiot god, or faith that no alternative exists to a zero-sum politics of spherical annihilation. Nevertheless, in less extreme situations, periodic challenges to our umwelt can indeed cause gradual change, and heavy exposure to diverse and conflicting alien myths can cause us to critically reconsider our own mythologies. Lack of exposure to alien myths means that the alternate realities produced by filter bubbles are just as stable as those previously produced by geography.

When we are familiar with the perspective of the ‘other side’, we can accurately distinguish between likely and unlikely stories — we can identify disinformation, even if that doesn’t impact our willingness to spread it. But constant and consistent exposure to the same material eats away at our critical response. Furthermore, simply pointing out that some stories are false has unintended consequences.

So, it’s vitally important that we retain that exposure. However, at the same time, we should not assume that such exposure will restore some mythic edifice of consensus truth. An (often justified) contrarian strain acts against the consensus, and the centralized power necessary for building the illusion of consensus can reasonably be expected to use that false consensus to bolster its own continued power.

Warzel’s article highlights DeepFace, AudioToObama, and similar technology as mechanisms for supporting widespread fraud in the near future. I have a couple of problems with these specific examples (in part because the technology is far from convincing, and in part because the limited scope of these projects and the existence of other related technologies means that they don’t substantially lower the cost of believable fraud), but on a fundamental level, fraud is always a possibility and our sense of what constitutes reliable evidence depends on a folk-understanding of fraud technologies. Nobody could use these technologies right now to convince a layman, let alone an expert, but the hype and scaremongering around them means that in the near future video will have the same status as photography in terms of perceived reliability — in other words, it will be considered easily faked.

The ultimate result of this — since video fraud is still approximately as expensive as it was 20 years ago — is that more skepticism will be expressed about ‘video evidence’, and that skepticism will be expressed earlier. This will probably mostly affect the people who have the resources and motivations to actually fake video evidence. It’s possible in the short term for people to take advantage of existing cultural bubbles to manipulate this skepticism toward political ends, but in the longer term we’re merely adapting to the state of the reliability of video evidence in the past several decades.

The time in which we live is unprecedented not for the unreliability of evidence but for its reliability. Again, we slowly return to equilibrium as political and technical competition takes advantage of short-term differences between the perceived and real reliability of certain kinds of evidence. There are real dangers associated with this manipulation, but they are not dangers to capital-T Truth but mundane ones — everyday grifting, political spin, and propaganda. Our best tool against this particular variety of manipulation is to maintain the accuracy of our folk-ideas about evidence reliability, not to demonize toys as existential risks.

Framing is very important. Where I agree with Warzel and Ovadya is that there are serious systematic problems with the way we route information between people — problems that cause political schismatism, failures of empathy, and in some cases direct physical danger. However, Ovadya and Warzel’s framing of this problem as one of attacks on consensus reality implies a solution with unfortunate authoritarian tinges: the reconsolidation of power over information.

Instead, I suggest framing the problem as systematic bias in exposure to information, in ways that limit the effectiveness of our normal intellectual growth. Rather than rebuilding the tower, we should be breaking down the walls.

Update, February 20th, 2018: Wendy Grossman on the RISKS mailing list brought to my attention that the term ‘Daily Me’ is credited to Nicholas Negroponte, in connection with his work on early electronic newspaper technology at the MIT Media Lab. In 1995, Fred Hapgood, writing for Wired, claimed that Negroponte had floated the idea as early as the 1970s, and pointed out a quote from a 1968 book considering a related idea.